
Binary cross entropy

11/15/2023

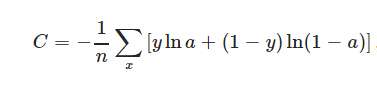

The basic ideas of cross entropy error and binary cross entropy error are relatively simple. But they're often a source of confusion for developers who are new to machine learning, because of the many topics related to how the two forms of cross entropy are used.

First, there's general cross entropy error, which is used to measure the difference between two sets of two or more values that each sum to 1.0. Put more accurately, cross entropy error measures the difference between a correct probability distribution and a predicted distribution. The basic math cross entropy error is computed as:

$$ce = -\sum_{i} c_i \log(p_i)$$

where the $c_i$ are the correct probabilities and the $p_i$ are the predicted probabilities. In words, "the negative sum of the corrects times the log of the predicteds."

But in machine learning contexts, the correct probability distribution is usually a vector with one 1 value and the rest 0 values, for example, (0, 1, 0). Notice that when the correct probability distribution has one 1 and the rest 0s, all the terms in the cross entropy calculation drop out except for one, because they are multiplied by zero. As a minor detail, note that if the predicted probability value that corresponds to the 1 value in the correct distribution is 0, you'd have a log(0) term, which is negative infinity, so a code implementation would blow up.

Now, unfortunately, binary cross entropy is a special case in machine learning contexts but not in general mathematics. Suppose you have a coin flip with correct = (0.5, 0.5) and predicted = (0.6, 0.4). This is a binary problem because there are only two outcomes, and binary cross entropy can be calculated as above with no problem. Or suppose you have a different ML problem with correct = (1, 0) and predicted = (0.8, 0.2). Again, you can compute binary cross entropy in the usual way without any problem.

But, alas, in machine learning contexts, binary correct and predicted values are almost never encoded as pairs of values. Instead, they're stored as just one value. The idea is that for a binary problem, if you know one probability, you automatically know the other. For example, suppose predicted is (0.7, 0.3). All you need to store is the 0.7 value, because the other probability must be 1 - 0.7 = 0.3.
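A minimal Python sketch of the calculations described above, using only the standard `math` module. The function names `cross_entropy` and `binary_cross_entropy`, the example predicted vector (0.1, 0.7, 0.2), and the `eps` clamp are illustrative assumptions, not taken from the post. The single-value binary version uses the standard form bce = -[y log(p) + (1 - y) log(1 - p)], which is just the general formula applied to the implied pairs (y, 1 - y) and (p, 1 - p).

```python
import math


def cross_entropy(correct, predicted):
    """General cross entropy error: ce = -sum(c_i * log(p_i)).

    `correct` and `predicted` are equal-length sequences of probabilities
    that each sum to 1.0.
    """
    total = 0.0
    for c, p in zip(correct, predicted):
        if c == 0.0:
            # Multiply-by-zero terms drop out, so skip them entirely.
            continue
        # math.log(0.0) raises ValueError: this is the "blow up" case.
        total += c * math.log(p)
    return -total


def binary_cross_entropy(y, p, eps=1e-7):
    """Binary cross entropy using the single-value encoding.

    `y` is the stored correct value and `p` is the stored predicted value;
    the implied pairs are (y, 1 - y) and (p, 1 - p). `eps` is an assumed
    clamp that keeps log() away from 0; it is not from the original post.
    """
    p = min(max(p, eps), 1.0 - eps)
    return -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))


if __name__ == "__main__":
    # Coin flip: two outcomes, computed with the general formula.
    print(round(cross_entropy((0.5, 0.5), (0.6, 0.4)), 4))  # 0.7136
    # ML-style binary problem with a one-hot correct pair.
    print(round(cross_entropy((1, 0), (0.8, 0.2)), 4))  # 0.2231
    # Three-class one-hot target: only the -log(0.7) term survives.
    print(round(cross_entropy((0, 1, 0), (0.1, 0.7, 0.2)), 4))  # 0.3567
    # The same binary problems, storing only one value per distribution.
    print(round(binary_cross_entropy(0.5, 0.6), 4))  # 0.7136
    print(round(binary_cross_entropy(1, 0.8), 4))  # 0.2231
    # A predicted probability of 0 at the correct position blows up.
    try:
        cross_entropy((0, 1, 0), (0.5, 0.0, 0.5))
    except ValueError as err:
        print("log(0) error:", err)
```

Clamping with `eps` is one common way to avoid the log(0) blow-up; the general `cross_entropy` function is deliberately left unguarded here so the failure case stays visible.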
